838 DL float MAC

- Author: Ananya P & Nidhi M D

- Description: MAC unit for 16 bit DL float data type

- GitHub repository

- Clock: 40000000 Hz

- Feedback: ✅ 1

Design Description

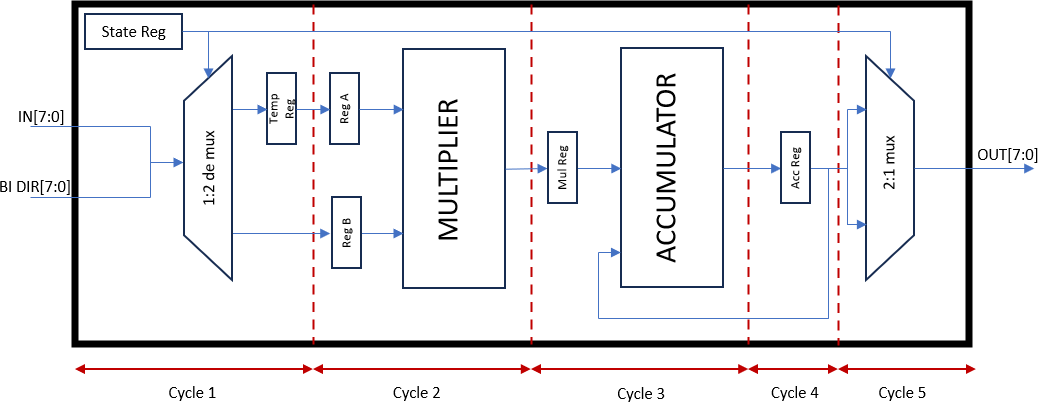

The design is a 5-stage pipelined implementation of the multiply-accumulate (MAC) operation for 16-bit DLFloat numbers. It includes two finite state machines (FSMs) at the input and output ports.

Details of DLFloats:

DLFloat is a 16-bit floating-point format designed for deep learning training and inference, where speed is prioritized over precision.

- Sign bit: 1 bit
- Exponent width: 6 bits
- Significand precision: 9 bits
- Exponent bias: 31

| Value | Binary format | Decimal value |
|---|---|---|
| Max normal | S. 111110.111111111 | 4.29077299e+09 |
| Min normal | S. 000001.000000000 | 9.31322575e-10 |
| Zero | S. 000000.000000000 | 0.0 |
| Infinity-NaN (combined) | S. 111111.111111111 | infinity |
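The field layout above can be illustrated with a small decoder. This is a minimal sketch, not the project's own code: the function name `decode_dlfloat` is hypothetical, and it only covers the bit patterns listed in the table (normal numbers with exponent fields 1 to 62, zero, and the combined infinity-NaN pattern). How the design interprets the remaining patterns is not stated here, so the sketch rejects them.

```python
import math

FRAC_BITS = 9   # significand (fraction) width from the format description
BIAS = 31       # exponent bias from the format description


def decode_dlfloat(word: int) -> float:
    """Decode a 16-bit DLFloat bit pattern into a Python float (sketch)."""
    if not 0 <= word <= 0xFFFF:
        raise ValueError("expected a 16-bit value")
    sign = -1.0 if (word >> 15) & 1 else 1.0
    exponent = (word >> FRAC_BITS) & 0x3F   # 6-bit exponent field
    fraction = word & 0x1FF                 # 9-bit fraction field

    if exponent == 0 and fraction == 0:
        return sign * 0.0                   # zero row of the table
    if exponent == 0x3F and fraction == 0x1FF:
        return sign * math.inf              # combined infinity-NaN row
    if 1 <= exponent <= 62:
        # Normal number: (1 + fraction/512) * 2**(exponent - bias)
        return sign * (1 + fraction / 512) * 2.0 ** (exponent - BIAS)
    # Assumption: patterns outside the table are left undefined in this sketch.
    raise ValueError("bit pattern not covered by the table")
```

For example, `decode_dlfloat(0x7DFF)` reproduces the max normal value and `decode_dlfloat(0x0200)` the min normal value from the table.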

Work Flow Details:

- The two 16-bit DLFloat input operands are supplied through the ui_in and uio_in (input) pins over two clock cycles and stored in two registers.

- In the MAC module, the first stage multiplies the two inputs. The multiplication result is then added to the accumulated value, which starts at zero upon reset.

- After the MAC operation, the 16-bit accumulated result is pushed out through the uo_out pins over two clock cycles: first the 8 most significant bits, then the 8 least significant bits.
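The byte-level transfer in the steps above could be modelled as follows. This is a sketch under stated assumptions: the helper names are hypothetical, the bit-to-pin mapping (lower byte on ui_in, upper byte on uio_in) is taken from the IO table, and cycle-accurate timing is not modelled.

```python
def split_operand(word: int) -> tuple[int, int]:
    """Return (ui_in, uio_in) for one 16-bit operand.

    Per the IO table, ui_in carries bits 7..0 and uio_in carries bits 15..8.
    """
    if not 0 <= word <= 0xFFFF:
        raise ValueError("expected a 16-bit value")
    return word & 0xFF, (word >> 8) & 0xFF


def input_sequence(a: int, b: int) -> list[tuple[int, int]]:
    """Pin values for the two input cycles, one operand per cycle."""
    return [split_operand(a), split_operand(b)]


def join_output(first_byte: int, second_byte: int) -> int:
    """Rebuild the 16-bit result from uo_out; the upper byte arrives first."""
    return ((first_byte & 0xFF) << 8) | (second_byte & 0xFF)
```

A testbench or FPGA driver would present each tuple from `input_sequence` on consecutive clock cycles and pass the two captured uo_out bytes to `join_output`.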

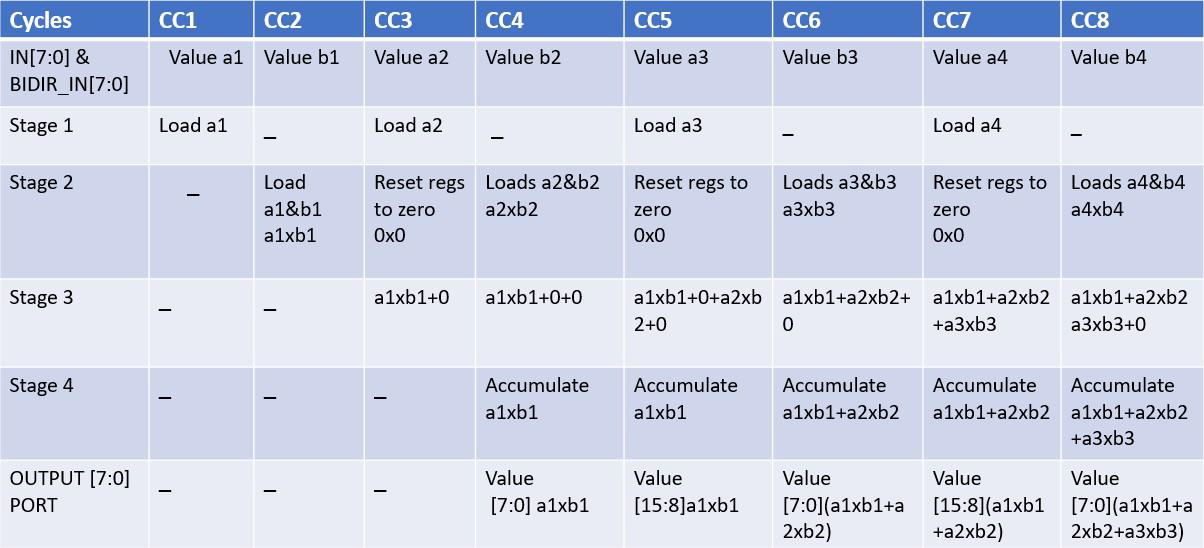

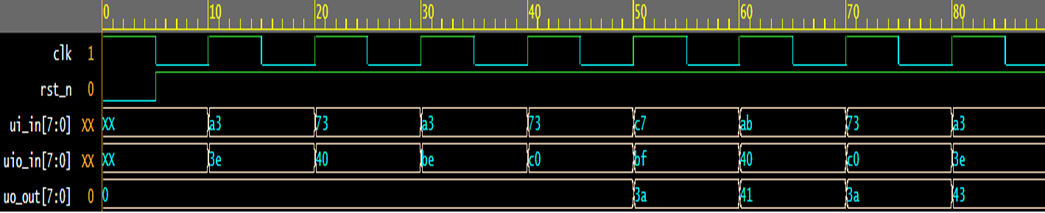

The table and the waveform below shows the working of the same:

This arrangement yields a pipelined architecture: once 5 clock cycles have elapsed after reset, output values can be pushed out on every cycle.

The addition and multiplication follow the IEEE 754 algorithm, and the MAC operation handles special cases such as infinity, NaN, subnormals, and zero, as well as the full 16-bit precision range.

The multiplier and adder blocks also handle overflow and underflow with saturation logic. On overflow, the result is set to the largest number that can be represented in the DLFloat format. On underflow, the result is set to the smallest representable number, except in the multiplier, where underflow is flushed to zero so that it does not affect the accumulated result.
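The saturation behaviour described above can be sketched as a behavioural model on Python floats. Several things here are assumptions rather than facts about the design: the names `saturate` and `mac_step` are hypothetical, "smallest number" is taken to mean the min normal value from the format table, infinity and NaN are simply passed through, and rounding to the 9-bit fraction is not modelled at all.

```python
import math

MAX_NORMAL = (1 + 511 / 512) * 2.0 ** 31   # max normal from the format table
MIN_NORMAL = 2.0 ** -30                    # min normal from the format table


def saturate(value: float, flush_underflow: bool) -> float:
    """Clamp a result into the DLFloat normal range (sketch)."""
    if value == 0.0 or math.isnan(value) or math.isinf(value):
        return value                       # assumption: special values pass through
    magnitude = abs(value)
    if magnitude > MAX_NORMAL:
        return math.copysign(MAX_NORMAL, value)    # overflow: largest number
    if magnitude < MIN_NORMAL:
        if flush_underflow:
            return math.copysign(0.0, value)       # multiplier: flush to zero
        return math.copysign(MIN_NORMAL, value)    # adder: smallest number (assumed)
    return value


def mac_step(acc: float, a: float, b: float) -> float:
    """One multiply-accumulate step: acc + a * b with saturation."""
    product = saturate(a * b, flush_underflow=True)
    return saturate(acc + product, flush_underflow=False)
```

Starting from `acc = 0.0` (the reset value), repeated calls to `mac_step` mimic the accumulation; a product that underflows contributes nothing, which is the stated reason for flushing it to zero.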

How to test

The DLFloat inputs are fed as the binary or hexadecimal encoding of the floating-point value. The outputs can be read back in the same way.
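To prepare test inputs, a value can be converted to its 16-bit pattern with a helper along these lines. This is a sketch only: `encode_dlfloat_exact` is a hypothetical name, and it deliberately accepts only zero and values that fit the normal range from the format table exactly, because the rounding behaviour of the hardware is not described here.

```python
import math


def encode_dlfloat_exact(value: float) -> int:
    """Encode an exactly representable value as a 16-bit DLFloat pattern."""
    if value == 0.0:
        return 0x8000 if math.copysign(1.0, value) < 0 else 0x0000
    if math.isnan(value) or math.isinf(value):
        raise ValueError("special values are not handled in this sketch")

    # abs(value) == mantissa * 2**exp2 with 0.5 <= mantissa < 1
    mantissa, exp2 = math.frexp(abs(value))
    fraction = (2 * mantissa - 1) * 512     # 9-bit fraction of 1.xxx significand
    exponent = (exp2 - 1) + 31              # biased exponent field

    if not fraction.is_integer():
        raise ValueError("value needs rounding; not modelled here")
    if not 1 <= exponent <= 62:
        raise ValueError("value is outside the normal range")

    sign = 1 if value < 0 else 0
    return (sign << 15) | (exponent << 9) | int(fraction)
```

For instance, `f"{encode_dlfloat_exact(1.0):04X}"` gives `3E00`, and `f"{encode_dlfloat_exact(1.0):016b}"` gives the same pattern in binary.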

External hardware

An FPGA is required to drive the inputs to the device. It must also be programmed to capture and display the 16-bit result, which arrives as two 8-bit halves over two clock cycles.

IO

| # | Input | Output | Bidirectional |
|---|---|---|---|
| 0 | FP16 in[0] | FP16 out[0]/FP16 out[8] | FP16 in[8] |
| 1 | FP16 in[1] | FP16 out[1]/FP16 out[9] | FP16 in[9] |
| 2 | FP16 in[2] | FP16 out[2]/FP16 out[10] | FP16 in[10] |
| 3 | FP16 in[3] | FP16 out[3]/FP16 out[11] | FP16 in[11] |
| 4 | FP16 in[4] | FP16 out[4]/FP16 out[12] | FP16 in[12] |
| 5 | FP16 in[5] | FP16 out[5]/FP16 out[13] | FP16 in[13] |
| 6 | FP16 in[6] | FP16 out[6]/FP16 out[14] | FP16 in[14] |
| 7 | FP16 in[7] | FP16 out[7]/FP16 out[15] | FP16 in[15] |

User feedback

- MichaelBell: I ported the cocotb test supplied with the project to microcotb and found it didn't work. This is because the timing is actually one cycle faster than the test tests for (due to a confusion about timing in the verification framework). With that adjustment made the test runs cleanly! Link for more details